Prerequisites

- A user account on Azure with access to Azure cloud resources.

- The Azure CLI.

- The Astro CLI.

- An Astro project.

- If you’re using Windows, Windows Subsystem for Linux.

Retrieve Azure user credentials locally

Run az login to obtain your user credentials locally. The Azure CLI stores the credentials in the following folder, depending on your operating system:

- Linux: $HOME/.azure/

- Mac: /Users/<username>/.azure

- Windows: %USERPROFILE%/.azure/
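To confirm that the login left credentials where the list above says, you can check the folder with a short script. The following is a minimal Python sketch, not part of the Azure CLI or the Astro CLI. The function name default_azure_config_dir is hypothetical, and the sketch assumes that the Azure CLI's AZURE_CONFIG_DIR environment variable, when set, takes precedence over the default folder.

```python
import os
import platform
from pathlib import Path


def default_azure_config_dir(system=None, env=None):
    """Return the folder where the Azure CLI is expected to keep credentials."""
    system = system or platform.system()
    env = os.environ if env is None else env

    # Assumption: AZURE_CONFIG_DIR, when set, overrides the default location.
    override = env.get("AZURE_CONFIG_DIR")
    if override:
        return Path(override)

    if system == "Windows":
        # %USERPROFILE%/.azure/
        return Path(env.get("USERPROFILE", str(Path.home()))) / ".azure"

    # Linux and Mac both resolve to $HOME/.azure
    return Path(env.get("HOME", str(Path.home()))) / ".azure"


if __name__ == "__main__":
    folder = default_azure_config_dir()
    status = "found" if folder.is_dir() else "not found, log in with the Azure CLI first"
    print(f"{folder}: {status}")
```

Passing system and env explicitly makes the function easy to check without touching the real environment, for example default_azure_config_dir("Linux", {"HOME": "/home/me"}).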

Configure your Astro project

To give Airflow access to your credentials, mount your .azure folder as a volume in Docker.

For Airflow 3, use the provided docker-compose.override.yml. For Airflow 2, replace api-server with webserver and remove the dag-processor block.

- In your Astro project, create a file named docker-compose.override.yml with the configuration for your operating system (Mac, or Windows and Linux).

- Add the following lines after the FROM line in your Dockerfile to install the Azure CLI inside your Astro Runtime image:

- Add the following environment variable to your .env file. Make sure the file path is the same volume location you configured in docker-compose.override.yml:

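Airflow resolves credentials for Azure connections from the following sources, in order, with later sources overriding earlier ones:

- Mounted user credentials in ~/.azure.

- Configurations in azure_client_id, azure_tenant_id, and azure_client_secret.

- An explicit username & password provided in the connection.

For example, a connection that defines its own username and password uses those credentials instead of the ones in ~/.azure/config.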
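The volume mount described in this section can be sketched by generating the override file from Python. This is an illustration under stated assumptions, not the configuration shipped with the Astro CLI: the function name compose_override is hypothetical, the scheduler and triggerer service names are assumed, and /usr/local/airflow/.azure is an assumed mount point inside the containers. Only the api-server, webserver, and dag-processor names come from the text above.

```python
# Assumed mount point for the credentials folder inside each container.
CONTAINER_AZURE_DIR = "/usr/local/airflow/.azure"


def compose_override(host_azure_dir, airflow_major=3):
    """Render docker-compose.override.yml text that mounts the host .azure folder."""
    if airflow_major >= 3:
        # Assumed service list for Airflow 3.
        services = ["scheduler", "api-server", "dag-processor", "triggerer"]
    else:
        # Airflow 2: webserver replaces api-server and there is no dag-processor block.
        services = ["scheduler", "webserver", "triggerer"]

    lines = ["services:"]
    for name in services:
        lines += [
            f"  {name}:",
            "    volumes:",
            f"      - {host_azure_dir}:{CONTAINER_AZURE_DIR}",
        ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Mac-style host path; keep <username> as a placeholder until you fill it in.
    print(compose_override("/Users/<username>/.azure"))
```

Whatever container path you choose, the .env step should point at that same path. In the Azure CLI, the variable that controls the configuration folder is AZURE_CONFIG_DIR.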

Test your credentials with a secrets backend

Now that Airflow has access to your user credentials, you can use them to connect to your cloud services. Use the following example setup to test your credentials by pulling values from different secrets backends.

- Create a secret for an Airflow variable or connection in Azure Key Vault. All Airflow variable and connection keys must be prefixed with the following strings, respectively:

  - airflow-variables-<my_variable_name>

  - airflow-connections-<my_connection_name>

  For example, to use a secret named mysecretvar in your dag, you must name the secret airflow-variables-mysecretvar. Store connections in URI format.

- In your Astro project, add the Microsoft Azure provider package, apache-airflow-providers-microsoft-azure, to your requirements.txt file.

- Add the AIRFLOW__SECRETS__BACKEND and AIRFLOW__SECRETS__BACKEND_KWARGS environment variables to your Astro project .env file. For additional configuration options, see the Apache Airflow documentation. Make sure to specify your vault_url, which you can find on the overview page of your Key Vault under Vault URI.

  By default, this setup requires that you prefix any secret names in Key Vault with airflow-connections or airflow-variables. If you don’t want to use prefixes in your Key Vault secret names, set the values for "connections_prefix" and "variables_prefix" to "" within AIRFLOW__SECRETS__BACKEND_KWARGS.

- Run astro dev start to start Airflow locally.

- Access the Airflow UI at localhost:8080 and create an Airflow Azure connection named azure_standard with no credentials. See Connections. When you use this connection in your dag, it falls back to your configured user credentials.

- Add a dag that uses the secrets backend to the dags directory of your Astro project. For example, the dag can retrieve a value from airflow/variables and print it to the terminal.

- In the Airflow UI, unpause your dag and click Play to trigger a dag run.

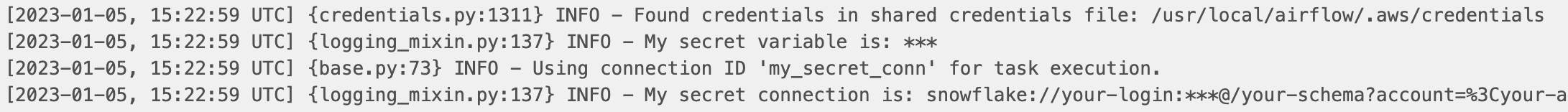

- View logs for your dag run. If the connection was successful, your masked secrets appear in your logs. See Airflow logging.
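The naming rule and the backend settings from the steps above can be sketched in Python. This is a hedged illustration, not the provider's code: secret_name and backend_env are hypothetical helpers, the vault URL is a placeholder, and the replacement of underscores with dashes reflects an assumption about how the Key Vault backend builds secret names (Key Vault names allow only alphanumeric characters and dashes).

```python
import json

# Class path of the Key Vault secrets backend in the Microsoft Azure provider.
BACKEND = "airflow.providers.microsoft.azure.secrets.key_vault.AzureKeyVaultBackend"


def secret_name(prefix, key, sep="-"):
    """Build the Key Vault secret name for an Airflow variable or connection key."""
    name = f"{prefix}{sep}{key}" if prefix else key
    # Assumption: underscores become dashes, because Key Vault names disallow "_".
    return name.replace("_", sep)


def backend_env(vault_url,
                connections_prefix="airflow-connections",
                variables_prefix="airflow-variables"):
    """Return the two .env entries that enable the secrets backend."""
    kwargs = {
        "connections_prefix": connections_prefix,
        "variables_prefix": variables_prefix,
        "vault_url": vault_url,
    }
    return {
        "AIRFLOW__SECRETS__BACKEND": BACKEND,
        "AIRFLOW__SECRETS__BACKEND_KWARGS": json.dumps(kwargs),
    }


if __name__ == "__main__":
    # Placeholder URL; copy the real value from Vault URI on the Key Vault overview page.
    for key, value in backend_env("https://<your-vault-name>.vault.azure.net/").items():
        print(f"{key}={value}")
    print(secret_name("airflow-variables", "mysecretvar"))
```

Passing empty strings for both prefixes models the prefix-free setup: secret_name("", "mysecretvar") then returns the bare key.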