Deploy Airflow images and Dags to Astro Private Cloud using CI/CD pipelines. This guide covers deployment options via CLI, API, and common CI/CD platforms.

Benefits of CI/CD for Airflow deployments

Deploying Dags and other changes via CI/CD workflows provides:

- Streamlined development: Deploy new and updated Dags efficiently across team members.

- Faster error response: Decrease maintenance costs and respond quickly to failures.

- Improved code quality: Enforce continuous automated testing to protect production Dags.

Deployment methods

Astro Private Cloud supports multiple deployment methods. The Astro CLI approach is recommended for most use cases due to its simplicity.

CLI deployment

The Astro CLI provides the simplest way to deploy to Astro Private Cloud from CI/CD pipelines. Build and deploy an image with `astro deploy`. The command accepts the following flags:

- `--dags`: Deploy only your `dags` folder. Works only if Dag-only deploys are enabled for the Deployment.

- `--image-name <custom-image>`: The name of a pre-built custom Docker image to use with your project. The image must be available on your local machine. If specified, building the image is skipped.

- `--remote`: Directly point the Deployment to the remote image and skip pushing the image. Use with `--image-name`.

- `--runtime-version <version>`: Specify the Runtime version of your image. Use with `--image-name`.

- `--force`: Force deploy even if your project contains errors or uncommitted changes. Use with caution in CI/CD pipelines, as it bypasses the safeguard that ensures only committed code is deployed.

- `--description "<text>"`: Attach a description to a code deploy for traceability. If not provided, the system automatically assigns a default description based on deploy type.
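As an illustration of how these flags might fit into a pipeline step, the sketch below composes a traceable description and passes it to `astro deploy`. It assumes the Deployment ID is given as a positional argument, and the function names and the branch and commit inputs are hypothetical values that your CI tool would need to supply.

```shell
set -eu

# Compose a short description so each deploy can be traced back to a commit.
# Arguments: branch name, full commit SHA (truncated to 7 characters).
deploy_description() {
  printf 'CI deploy from %s at %.7s\n' "$1" "$2"
}

# Run a Dag-only deploy when the mode is "dags", otherwise a full image deploy.
# Arguments: Deployment ID, mode ("dags" or "image"), description.
# Assumption: the Deployment ID is accepted as a positional argument.
run_deploy() {
  if [ "$2" = "dags" ]; then
    astro deploy "$1" --dags --description "$3"
  else
    astro deploy "$1" --description "$3"
  fi
}
```

A pipeline step could then call `run_deploy "$DEPLOYMENT_ID" image "$(deploy_description "$BRANCH" "$COMMIT_SHA")"`, where the three variables are placeholders for whatever your CI tool exposes.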

API deployment

For advanced automation scenarios, you can use the Houston API’s `upsertDeployment` mutation to deploy a pre-built image to a Deployment. This approach is useful when you need to integrate with systems that can’t use the Astro CLI directly.

The mutation accepts the following fields:

- `releaseName`: The release name of your Deployment, following the pattern `spaceyword-spaceyword-4digits`. For example, `infrared-photon-7780`.

- `image`: The full image path including registry, repository, and tag. The image must be accessible from your Astro Private Cloud data plane.

- `runtimeVersion`: The Astro Runtime version that the image is based on. For example, `12.1.0`.

- `deployRevisionDescription`: An optional description for the deploy revision, useful for tracking deploys in the Astro Private Cloud UI.
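The sketch below shows one way such a request could be assembled with `curl`. Only the mutation name and its four fields come from this guide. The endpoint URL (`https://houston.${BASE_DOMAIN}/v1`), the `Authorization` header format, the GraphQL variable types, and the returned `id` field are all assumptions to verify against your installation's Houston schema.

```shell
set -eu

# Build the JSON body for the upsertDeployment mutation.
# Arguments: release name, image, runtime version, description.
# Values are interpolated as-is, so they must not contain quotes or backslashes.
# Assumption: variable types and the selected "id" field match your schema.
upsert_payload() {
  query='mutation Deploy($releaseName: String, $image: String, $runtimeVersion: String, $deployRevisionDescription: String) { upsertDeployment(releaseName: $releaseName, image: $image, runtimeVersion: $runtimeVersion, deployRevisionDescription: $deployRevisionDescription) { id } }'
  printf '{"query":"%s","variables":{"releaseName":"%s","image":"%s","runtimeVersion":"%s","deployRevisionDescription":"%s"}}\n' \
    "$query" "$1" "$2" "$3" "$4"
}

# Send the mutation. BASE_DOMAIN and API_KEY_SECRET must be set by the CI tool.
# Assumption: the Houston endpoint path and the header format shown here.
deploy_via_api() {
  curl --fail --silent --show-error \
    --request POST "https://houston.${BASE_DOMAIN}/v1" \
    --header "Authorization: ${API_KEY_SECRET}" \
    --header "Content-Type: application/json" \
    --data "$(upsert_payload "$1" "$2" "$3" "$4")"
}
```

Keeping the payload builder separate from the network call makes it possible to inspect or log the request body in a dry run before anything is sent.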

CI/CD platform examples

The following examples show how to implement CI/CD pipelines using the Astro CLI with popular CI/CD platforms. For advanced Docker registry-based deployment examples, see Advanced: Docker registry deployment.

GitHub Actions

GitLab CI

CircleCI

Example CI/CD workflow

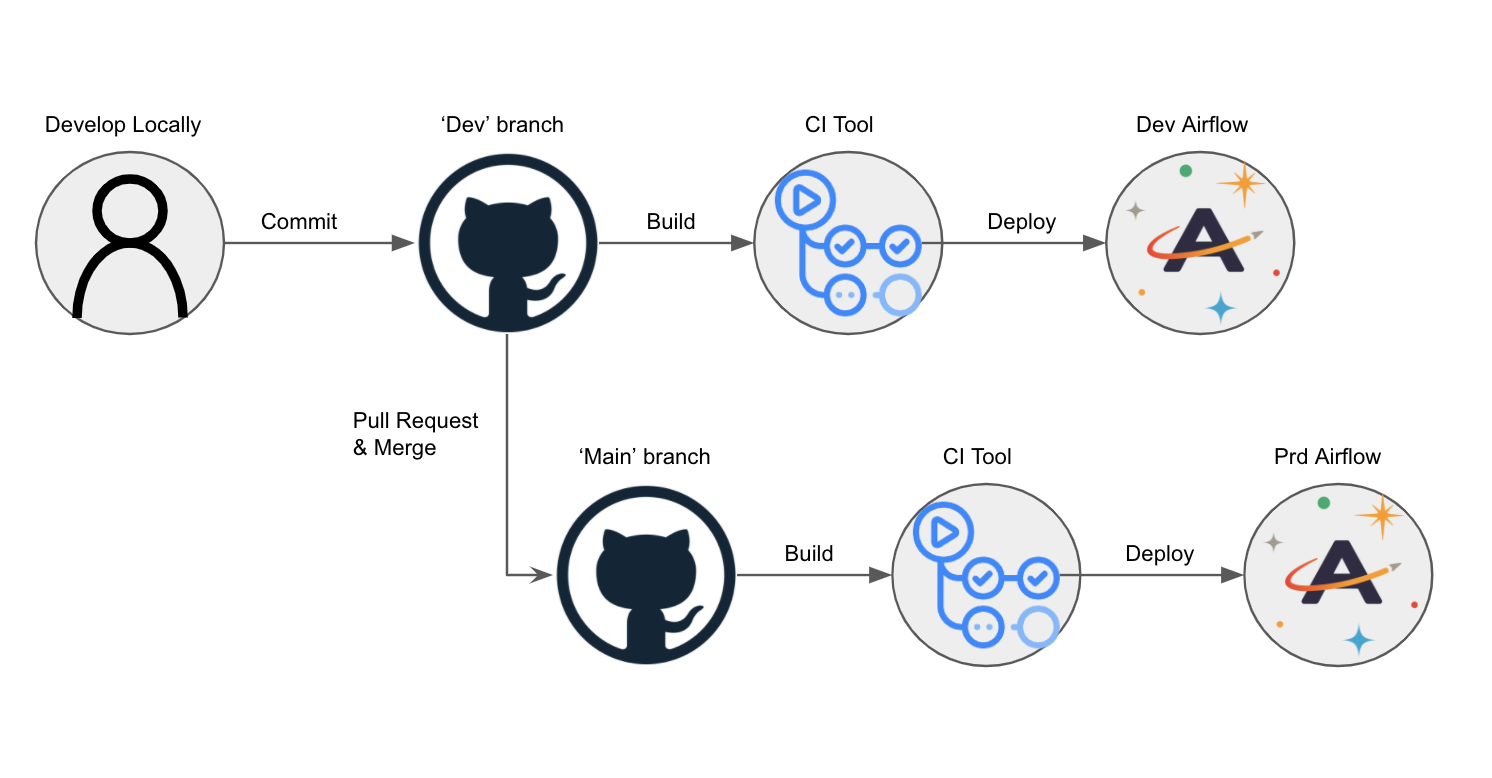

Consider an Astro project hosted on GitHub and deployed to Astro Private Cloud. In this scenario, the `dev` and `main` branches of an Astro project are hosted on a single GitHub repository, and the `dev` and `prod` Airflow Deployments are hosted on an Astronomer Workspace.

Using CI/CD, you can automatically deploy Dags to your Airflow Deployment by pushing or merging code to a corresponding branch in GitHub. The general setup:

- Create two Airflow Deployments within your Astronomer Workspace, one for `dev` and one for `prod`.

- Create a repository in GitHub that hosts project code for all Airflow Deployments within your Astronomer Workspace.

- In your GitHub code repository, create a `dev` branch off of your `main` branch.

- Configure your CI/CD tool to deploy to your `dev` Airflow Deployment whenever you push to your `dev` branch, and to deploy to your `prod` Airflow Deployment whenever you merge your `dev` branch into `main`.
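The branch-to-Deployment mapping in the last step can be sketched as a small shell helper. The variable names `DEV_DEPLOYMENT_ID` and `PROD_DEPLOYMENT_ID` are hypothetical and would be defined as CI/CD variables in your own tool.

```shell
# Print the Deployment ID that a given branch should deploy to.
# DEV_DEPLOYMENT_ID and PROD_DEPLOYMENT_ID are hypothetical CI variables.
deployment_for_branch() {
  case "$1" in
    dev)  printf '%s\n' "$DEV_DEPLOYMENT_ID" ;;
    main) printf '%s\n' "$PROD_DEPLOYMENT_ID" ;;
    *)
      # Any other branch is rejected so that it never deploys by accident.
      echo "no Deployment mapped to branch '$1'" >&2
      return 1
      ;;
  esac
}
```

Failing on unmapped branches is a deliberate choice here: a feature branch that reaches the deploy step stops the pipeline instead of silently targeting one of the two Deployments.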

Service account authentication

Prerequisites

Before completing this setup, ensure you:

- Have access to a running Astro Deployment.

- Have installed the Astro CLI.

- Are familiar with your CI/CD tool of choice.

Create a service account

To authenticate your CI/CD pipeline to the Astronomer private Docker registry, create a service account and grant it an appropriate set of permissions. You can do so using the Astro Private Cloud UI or CLI. In both cases, creating a service account generates an API key for the CI/CD process. You can delete the service account at any time after creating it. You can create service accounts at the:

- Workspace level: Allows you to deploy to multiple Airflow Deployments with one code push.

- Deployment level: Ensures that your CI/CD pipeline only deploys to one particular Deployment.

Create a service account using the CLI

For a Deployment-level service account, first get your Deployment ID, which `astro deployment list` returns.

Create a service account using the API

You can also create a service account using the GraphQL API. The `deploymentUuid` field is the same Deployment ID (UUID) returned by `astro deployment list`.

Create a service account using the Astro Private Cloud UI

If you prefer to provision a service account through the Astro Private Cloud UI:

- Log in to Astronomer and navigate to Deployment > Service Accounts.

- Configure your service account:

  - Give it a Name

  - Give it a Category (optional)

  - Grant it a User Role (must be “Editor” or “Admin” to deploy code)

- Copy the API key that is generated

The API key is only visible during the session. Store it securely in an environment variable or secret management tool.

Set credentials in CI/CD environment

After creating a service account, set the credentials in your CI/CD environment.

Best practices

- Use service accounts for CI/CD authentication instead of personal credentials.

- Store credentials securely in CI/CD secrets or environment variables.

- Deploy only committed code in CI/CD pipelines to ensure reproducibility. Avoid using `--force` unless you have a specific reason to bypass the git commit check.

- Add deployment descriptions with `--description` for audit trail and version tracking.

- Test in staging before deploying to production to catch issues early. For guidance on writing Dags that work across environments, see Manage Airflow code and Dag writing best practices.

- Use Dag-only deploys when you only need to update Dag files without rebuilding images.
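One way to apply the last practice automatically is to inspect which files a commit changed and choose the deploy type from that. The sketch below is an illustration only: the `deploy_mode` helper is hypothetical, and it assumes Dag files live under a top-level `dags/` folder.

```shell
# Read changed file paths on stdin, one per line, and print "dags" when every
# path is under dags/, otherwise "image". Empty input is treated as "image".
deploy_mode() {
  mode="dags"
  seen=0
  while IFS= read -r path; do
    [ -n "$path" ] || continue
    seen=1
    case "$path" in
      dags/*) ;;                # Dag file: a Dag-only deploy is still enough
      *) mode="image" ;;        # anything else requires rebuilding the image
    esac
  done
  if [ "$seen" -eq 0 ]; then
    mode="image"
  fi
  printf '%s\n' "$mode"
}
```

For example, `git diff --name-only HEAD~1 HEAD | deploy_mode` prints `dags` only when the latest commit touched nothing outside the `dags/` folder.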

Advanced: Docker registry deployment

For advanced use cases, legacy systems, or when you need more control over the Docker build and push process, you can deploy directly to the Astronomer Docker registry. Most users should use the CLI deployment method instead.

When to use Docker registry deployment:

- You need custom Docker build processes or multi-stage builds.

- You’re integrating with existing Docker-based CI/CD workflows.

- You require fine-grained control over image tagging and versioning.

- You’re working with legacy CI/CD systems that don’t support the Astro CLI.

If you’re using BuildKit with the Buildx plugin, you need to add the `--provenance=false` flag to your `docker buildx build` commands.

The Docker registry examples use `RELEASE_NAME` (for example, `infrared-photon-7780`) instead of `DEPLOYMENT_ID`. Both refer to your Astro Deployment, but the Astro CLI uses `DEPLOYMENT_ID` while the Docker registry approach uses the release name.

Authenticate and push to Docker

The first step of this pipeline authenticates against the Docker registry that stores an individual Docker image for every code push or configuration change. The login uses the following values:

- `BASE_DOMAIN`: The domain at which your Astro Private Cloud instance is running.

- `API_KEY_SECRET`: The API key that you got from the CLI or the UI and stored in your secret manager.
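A minimal sketch of this authentication step follows. It assumes the registry accepts the API key as the password with a placeholder username of `_`, which you should confirm for your installation. The `require_var` helper is hypothetical and only guards against unset variables.

```shell
set -eu

# Fail early with a clear message if a required variable is unset or empty.
require_var() {
  eval "value=\${$1:-}"
  if [ -z "$value" ]; then
    echo "missing required variable: $1" >&2
    return 1
  fi
}

# Log in to the private registry at registry.${BASE_DOMAIN}.
# Assumption: the username "_" with the API key as the password.
registry_login() {
  require_var BASE_DOMAIN
  require_var API_KEY_SECRET
  # --password-stdin keeps the key out of the process list and shell history.
  printf '%s' "$API_KEY_SECRET" |
    docker login "registry.${BASE_DOMAIN}" --username _ --password-stdin
}
```

Checking the variables before calling `docker login` turns a misconfigured secret into an explicit error message instead of a less obvious authentication failure.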

Build and push an image

After you are authenticated, you can build, tag, and push your Airflow image to the private registry, where a webhook triggers an update to your Astro Deployment.

To deploy successfully to Astro Private Cloud, the version in the `FROM` statement of your project’s Dockerfile must be the same as or newer than the Runtime version of your Astro Deployment. For more information on upgrading, see Upgrade Airflow.

The image reference is built from the following parts:

- Registry Address: Tells Docker where to push images. On Astro Private Cloud, your private registry is located at `registry.${BASE_DOMAIN}`.

- Release Name: The release name of your Astro Deployment, following the pattern `spaceyword-spaceyword-4digits` (for example, `infrared-photon-7780`).

- Tag Name: Each deploy generates a Docker image with a corresponding tag. If you deploy via the CLI, the tag defaults to `deploy-n`, with `n` representing the number of deploys. For CI/CD, customize this tag to include the source and build number.
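To make the three parts concrete, the sketch below composes an image reference and then builds and pushes it. The `airflow` repository segment and the `ci-` tag prefix are assumptions chosen for illustration, and `BUILD_NUMBER` stands for whatever build counter your CI tool exposes.

```shell
set -eu

# Compose the full image reference from its three parts.
# Arguments: base domain, release name, build number.
# Assumption: the "airflow" repository name and the "ci-" tag prefix.
image_ref() {
  printf 'registry.%s/%s/airflow:ci-%s\n' "$1" "$2" "$3"
}

# Build the image from the current directory and push it to the registry.
# Expects BASE_DOMAIN, RELEASE_NAME, and BUILD_NUMBER in the environment.
build_and_push() {
  ref="$(image_ref "$BASE_DOMAIN" "$RELEASE_NAME" "$BUILD_NUMBER")"
  docker build --tag "$ref" .
  docker push "$ref"
}
```

With a base domain of `example.com`, the release name `infrared-photon-7780`, and build number `42`, `image_ref` yields `registry.example.com/infrared-photon-7780/airflow:ci-42`.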

Run unit tests

For CI/CD pipelines that push code to a production Deployment, Astronomer recommends adding a unit test after the image build step to ensure that you don’t push a Docker image with breaking changes. To run a basic unit test, add a step in your CI/CD pipeline that executes `docker run` and then runs `pytest tests` in a container based on your newly built image before it’s pushed to your registry. For guidance on writing pytest tests for Airflow, including Dag validation tests and unit tests for custom operators, see Test Airflow Dags.

For example, you can add the following command as a step in your CI/CD pipeline:
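A minimal sketch of such a step, assuming the image was tagged as `registry.${BASE_DOMAIN}/${RELEASE_NAME}/airflow:ci-${BUILD_NUMBER}` in the build step (the `airflow` segment and `ci-` prefix are assumptions) and that the image contains a `tests` directory and `pytest`:

```shell
set -eu

# Print the reference of the image under test.
# Assumption: the same path layout that the build step used for tagging.
test_image() {
  printf 'registry.%s/%s/airflow:ci-%s\n' "$BASE_DOMAIN" "$RELEASE_NAME" "$BUILD_NUMBER"
}

# Run the project's pytest suite in a throwaway container based on that image.
# A non-zero pytest exit code fails the pipeline step before any push happens.
run_image_tests() {
  docker run --rm "$(test_image)" /bin/bash -c "pytest tests"
}
```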

`BASE_DOMAIN`, `RELEASE_NAME`, and `BUILD_NUMBER` should be set as environment variables in your CI/CD tool.

Configure your CI/CD pipeline

Depending on your CI/CD tool, configuration varies slightly. This section focuses on outlining what needs to be accomplished, not the specifics of how. At its core, your CI/CD pipeline first authenticates to the Astronomer private registry, then builds, tags, and pushes your Docker image to that registry.

Docker registry example: GitHub Actions

This example shows how to implement CI/CD using GitHub Actions with Docker registry deployment for both development and production environments.

Setup steps:

- Create a GitHub repository for your Astro project with `dev` and `main` branches.

- Create two Deployment-level service accounts: one for Dev and one for Production.

- Add service accounts as GitHub secrets named `SERVICE_ACCOUNT_KEY` and `SERVICE_ACCOUNT_KEY_DEV`.

- Create a GitHub Action for the workflow, replacing `<dev-release-name>` and `<prod-release-name>` with your Deployment release names.

- Test the workflow by committing changes to `dev` to update your development Deployment, then merge `dev` into `main` via pull request to update production.

The `prod-push` action only runs after merging a pull request. To further restrict this pipeline, add branch protection settings in GitHub to prevent direct pushes to `main`.

Additional Docker registry examples

The following sections provide templates for configuring CI/CD pipelines using popular CI/CD tools with Docker registry deployment. Each template can be customized to manage multiple branches or Deployments based on your needs.

DroneCI

CircleCI

Jenkins

Bitbucket

If you are using Bitbucket, this script should work (courtesy of our friends at Das42).

GitLab

AWS CodeBuild

Azure DevOps

This example shows how to automatically deploy your Astro project from a GitHub repository using an Azure DevOps pipeline.

Prerequisites:

- A GitHub repository hosting your Astro project.

- An Azure DevOps account with permissions to create new pipelines.

Setup steps:

- Create a file called `astro-devops-cicd.yaml` in your Astro project repository.

- Follow the steps in Azure documentation to link your GitHub repository to an Azure pipeline. When prompted for the source code for your pipeline, specify that you have an existing Azure Pipelines YAML file and provide the file path: `astro-devops-cicd.yaml`.

- Finish and save your Azure pipeline setup.

- In Azure, add environment variables for the following values:

  - `BASE-DOMAIN`: Your base domain for Astro Private Cloud

  - `RELEASE-NAME`: The release name for your Deployment

  - `SVC-ACCT-KEY`: The service account key you created for CI/CD (mark as secret)